10 key considerations for platforms preparing for the 2024 elections

February 7, 2024

As the world gears up for a pivotal election season in 2024, with critical ballots set in the United States, the United Kingdom, and many other nations, any online platform that hosts user-generated content should brace for an unprecedented influx of political content. Every election period is marked by vigorous debate, fervent campaigning, and heightened public engagement, but in 2024 the stakes are even higher, presenting unique challenges and opportunities for content moderation.

As veteran content moderators, we’ve experienced many election seasons, and in this guide, we’ll share some of the lessons and strategies we’ve learned that help keep our favorite online platforms a credible and safe space for political discourse.

1. Surge in user activity

During election seasons, content moderation faces the unique challenge of managing a significant increase in user activity. Campaign seasons bring an influx of political content in many forms, including text, images (memes among them), and video. Because political discussion happens in real time, moderation must be both fast and accurate to maintain platform integrity, especially when countering misinformation and upholding community guidelines.

To prepare for this, a combination of AI and human moderation is essential. At WebPurify, we deploy advanced AI tools for initial content analysis, which enables us to efficiently handle the bulk of this content by identifying patterns indicative of guideline violations.

However, the nuanced nature of political discourse requires the discerning judgment of human moderators, particularly for context-sensitive content. Scaling up human resources and providing specialized training for election-related content moderation becomes crucial in these situations.
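As a rough illustration of the AI-plus-human split described above, the sketch below routes each item by a classifier's confidence score: clear violations are actioned automatically, clearly benign items pass, and the uncertain middle band, plus anything tagged as political, goes to a human queue. The `ai_score` and `is_political` fields and both thresholds are hypothetical assumptions for illustration, not a description of WebPurify's actual system.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_REMOVE = "auto_remove"
    APPROVE = "approve"
    HUMAN_REVIEW = "human_review"


@dataclass
class Item:
    item_id: str
    ai_score: float     # hypothetical: model's estimated probability of a violation (0.0-1.0)
    is_political: bool  # hypothetical: set by an upstream topic classifier


# Hypothetical thresholds; real values would be tuned per policy and per model.
REMOVE_THRESHOLD = 0.95
APPROVE_THRESHOLD = 0.10


def route(item: Item) -> Route:
    """Decide whether an item can be handled automatically or needs a human."""
    # Political content is context-sensitive, so it always gets human judgment.
    if item.is_political:
        return Route.HUMAN_REVIEW
    if item.ai_score >= REMOVE_THRESHOLD:
        return Route.AUTO_REMOVE
    if item.ai_score <= APPROVE_THRESHOLD:
        return Route.APPROVE
    # Anything the model is unsure about goes to human moderators.
    return Route.HUMAN_REVIEW
```

Sending every political item to people trades throughput for accuracy; during a surge, a platform might instead widen or narrow the middle band, which is one reason stress testing the whole pipeline matters.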

Continuous training and updates for both AI systems and human moderators are key to keeping pace with evolving political content and communication trends. Stress testing these systems ahead of time also helps confirm they can handle increased loads. Together, these measures support a balanced and responsive content moderation process during the high-stakes election period.

2. Combating misinformation and disinformation

Misinformation and disinformation pose huge challenges during election seasons, as they can skew public perception and influence voter behavior. The responsibility of content moderators intensifies as they work to identify and manage false or misleading information circulating online.

Our moderation strategy combines human insight with AI efficiency. While AI helps identify hate speech and inflammatory content, it's our human moderators who tackle the more complex challenge of misinformation, using tools like keyword lists for support. Because false information is often sophisticated and depends on context and subtle nuance, human moderators are essential for reviewing flagged content and making informed decisions about its accuracy and intent, which helps keep our online environments trustworthy.
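Keyword lists don't decide on their own whether a claim is false; in the workflow described above they help surface candidates for human review. A minimal sketch, assuming a hypothetical, hand-maintained list of phrases tied to known false narratives, might match posts like this and queue any hits for a moderator rather than removing them.

```python
from __future__ import annotations

import re
from typing import Iterable


def build_matcher(phrases: Iterable[str]) -> re.Pattern[str]:
    """Compile one case-insensitive pattern that matches any phrase as whole words."""
    # Longest phrases first, so a longer phrase wins over a shorter one it contains.
    escaped = sorted((re.escape(p) for p in phrases), key=len, reverse=True)
    return re.compile(r"\b(?:" + "|".join(escaped) + r")\b", re.IGNORECASE)


def flag_for_review(post: str, matcher: re.Pattern[str]) -> list[str]:
    """Return the matched phrases; a non-empty result means 'send to a human', not 'remove'."""
    return [m.group(0).lower() for m in matcher.finditer(post)]


# Hypothetical watch list for illustration only.
matcher = build_matcher(["vote by text", "polls close early"])
print(flag_for_review("You can VOTE BY TEXT this year!", matcher))
```

Word boundaries keep "vote" on its own from triggering the "vote by text" entry; the moderator, not the pattern, judges whether a matched post is actually misleading.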

An often-overlooked but effective part of this process is clear communication: platforms should explain their policies on misinformation and the actions they take when such content is identified, whether it is downranked, labeled, or removed.

Notifying users when they’ve interacted with or shared misleading content and providing them with accurate information or context goes a long way in stopping the spread of misinformation.

3. Political advertisements

The election season sees a dramatic increase in political advertisements across the digital platforms that allow them. These platforms then face the complex job of ensuring these ads comply with platform policies, legal standards, and transparency and disclosure guidelines, while also maintaining a fair and balanced public discourse. This is where working with vendor partners like WebPurify can be advantageous.

Our content moderation teams play a crucial role in maintaining the integrity and transparency of political advertising on online platforms. Firstly, our moderators are trained to recognize the diverse forms political ads can take, from references to candidates, political parties, and elections, to advocacy for or against political entities or ballot measures.

Once moderators identify this kind of political content, they label it as a political ad. This labeling enables platforms to certify advertisers as political entities, subjecting them to higher standards for disclosure, targeting, and transparency.

Additionally, our moderators are trained to scrutinize ad targeting parameters to confirm compliance with sensitive-targeting requirements, which includes ensuring that ads do not inappropriately target users based on their political affiliations or beliefs. They also check that each ad clearly discloses who paid for it and that the funding sources comply with legal requirements.

Moderators will also enforce rules around ‘micro-targeting’, ensuring that political ads do not unfairly target specific demographics with potentially divisive or manipulative messages.

By enforcing these standards, we help hold advertisers accountable and uphold the principles of fairness and transparency in political advertising. This approach not only supports a more informed electorate but also reinforces the responsibility of advertisers to engage in ethical practices.
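The checks described above can be partly pre-screened before a human makes the call. The sketch below assumes a hypothetical ad record with a paid-for-by field, a dictionary of targeting parameters, and an estimated audience size; the sensitive targeting keys and the minimum audience size (used here as one possible proxy for micro-targeting) are illustrative assumptions, since real rules vary by platform and jurisdiction.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical policy values; actual rules vary by platform and jurisdiction.
SENSITIVE_TARGETING_KEYS = {"political_affiliation", "political_beliefs"}
MIN_AUDIENCE_SIZE = 1000


@dataclass
class PoliticalAd:
    ad_id: str
    paid_for_by: str | None  # disclosed funder, if any
    targeting: dict[str, str] = field(default_factory=dict)
    estimated_audience: int = 0


def review_ad(ad: PoliticalAd) -> list[str]:
    """Return issues for a human moderator to confirm; an empty list means no automatic flags."""
    issues = []
    if not ad.paid_for_by:
        issues.append("missing paid-for-by disclosure")
    # Sorted so the output order is deterministic when several keys are present.
    for key in sorted(SENSITIVE_TARGETING_KEYS.intersection(ad.targeting)):
        issues.append(f"sensitive targeting on {key!r}")
    if ad.estimated_audience < MIN_AUDIENCE_SIZE:
        issues.append("audience below minimum size (possible micro-targeting)")
    return issues
```

The function only produces flags; as the section notes, the final decision to allow, modify, or reject an ad rests with moderators who know the relevant regional rules.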

Beyond these checks, our moderation teams are trained to distinguish between legitimate political messaging and content that could be misleading or violate advertising policies. This requires a detailed understanding of political contexts and the nuances of electoral regulations, which can vary significantly by region.

Human moderators with expertise in political content and regional laws are essential for making the final call on whether an ad should be allowed, modified, or rejected.

4. Hate speech and harassment

Sadly, election periods often see a spike in online hate speech and harassment, as political debates intensify and become more polarized. Platforms then face the urgent task of identifying and mitigating such content to maintain a safe and respectful environment.

The primary challenge many platforms face is the nuanced nature of hate speech and harassment, which can often be subtle or context-dependent. The complexity of human language and the subtleties of political discourse mean that AI cannot reliably identify every instance of hate speech and harassment, especially across multiple languages.

When platforms work with vendor partners like WebPurify, they gain moderators trained to distinguish between passionate political debate and content that crosses the line into abuse or incitement to violence. This involves understanding cultural and contextual nuances and staying vigilant against coordinated harassment campaigns targeting specific individuals or groups.

To help prevent this, platforms should review and, where necessary, update their community guidelines ahead of elections, clarifying what constitutes unacceptable behavior. User education campaigns can also be effective, informing the community about the importance of respectful discourse and the consequences of violating community standards.

In-product prompts can add to these measures as a direct intervention tool, nudging users to reconsider an intended post in real time. This immediate feedback loop encourages users to reflect on what they are contributing and serves as an active reminder that community guidelines exist and are enforced.

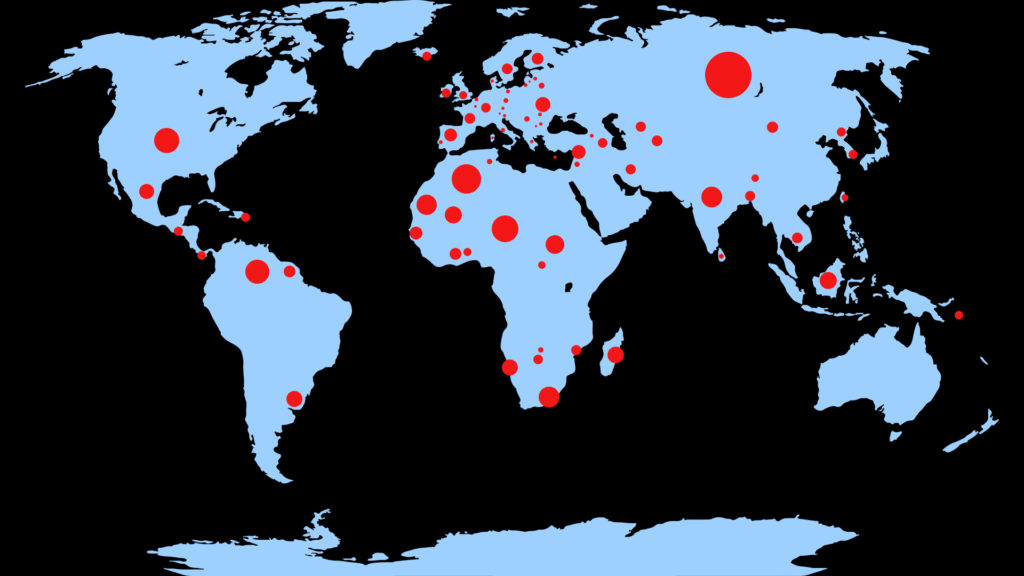

5. Maintaining a global perspective

Election seasons present unique challenges for content moderation across the globe, with each country having distinct political, cultural, and legal landscapes. For platforms with a global reach, having a diverse group of policy makers and process designers is crucial.

These individuals bring a wide range of cultural understandings and legal insights, enabling them to create moderation guidelines that are both globally aware and locally relevant. This diversity ensures that the nuances of political, cultural, and legal landscapes are considered, even if the moderation execution is centralized.

For instance, what’s deemed acceptable in one cultural context might be sensitive or controversial in another, highlighting the need for policies that are adaptable and sensitive to these differences.

6. User education and transparency

During election seasons, it’s vital for platforms to not only manage content but also focus on user education and transparency. Platforms can play a proactive role in educating users on how to identify misinformation, emphasizing the importance of checking sources and understanding context.

Additionally, clear communication about the platform's community guidelines, especially those pertaining to political content, is essential in setting user expectations. Encouraging critical thinking and media literacy among users, through techniques such as prebunking (preemptive debunking), also contributes to a more informed and discerning online community.

Along with education, transparency in content moderation is equally important. Platforms should openly communicate their content moderation policies, particularly how political content is being handled.

This includes providing insights into the decision-making process, detailing the roles of AI and human moderators, and explaining the actions taken against misinformation, such as partnerships with fact-checkers or labeling strategies. Furthermore, establishing feedback mechanisms and a clear appeal process for moderation decisions reinforces trust and accountability.

By combining user education with a transparent approach to content moderation, platforms can maintain their own integrity and also support the broader democratic process by fostering well-informed public discourse.

7. AI governance

As AI becomes increasingly integral to content moderation, especially during elections, AI governance is a critical concern. Broadly, this involves implementing policies that require disclosures and labels on AI-generated content, such as digital watermarks, some of which are spelled out in President Biden's Executive Order on AI. However, as we've discussed in the past, there are no simple solutions: even watermarks can be edited.

Overall, platforms should strive for the kind of transparency described above, helping users distinguish human-generated content from content created by AI, particularly manipulated or synthetic media, which is becoming increasingly sophisticated.

The challenge lies in accurately identifying AI-generated content and ensuring that labels are clear and understandable to the average user.

8. Making credible content more discoverable

To counteract the spread of misinformation, online platforms should also be thinking about ways to make credible content more discoverable. This might involve labeling profiles and posts of official candidates and verified news sources.

Additionally, platforms can proactively surface essential election-related information, like voter registration deadlines, within their interface.

Platforms can use ranking algorithms that prioritize credible sources, along with in-product features that highlight important civic information, and in doing so play a significant role in shaping an informed electorate.

Such initiatives not only help combat misinformation but also provide users with easy access to reliable, authoritative information.

9. Third-party fact-checking

Collaboration with third-party fact-checkers, including academic experts and seasoned journalists, adds another critical layer of verification to content moderation. These partnerships are particularly valuable during elections when misinformation is rife.

Fact-checking organizations, such as NewsGuard, bring specialized skills in verifying complex claims and enhancing the accuracy of information that’s disseminated online. Integrating their insights into the moderation process involves a combination of automated flagging of potentially false content and expert review, providing a robust response to the challenges of misinformation and disinformation.

10. Targeted threat intelligence for election integrity

Finally, it must be remembered that safeguarding election integrity extends well beyond physical polling stations into the vast and intricate channels of online platforms. Targeted threat intelligence plays a crucial role in this endeavor, especially in identifying and countering sophisticated disinformation campaigns that may be orchestrated by external entities.

This involves deploying specialized teams, possibly in collaboration with external cybersecurity experts, to analyze content and user behavior patterns. By monitoring these signals, platforms can detect coordinated attempts to manipulate political discourse, spread misinformation, or sow discord among the electorate.
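One simple behavioral signal of the coordinated manipulation described above is many distinct accounts posting near-identical text within a short window. The sketch below groups posts by normalized text and flags a group when enough accounts post it inside a sliding time window. The `Post` fields, the normalization, and both thresholds are hypothetical assumptions; real threat-intelligence work combines many such signals with human analysis.

```python
from __future__ import annotations

import re
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Post:
    account_id: str
    text: str
    created_at: datetime


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so trivial edits don't hide copies."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def find_coordinated_clusters(
    posts: list[Post],
    min_accounts: int = 5,                      # hypothetical threshold
    window: timedelta = timedelta(minutes=30),  # hypothetical threshold
) -> list[list[Post]]:
    """Return groups of same-text posts that reached min_accounts distinct accounts within window."""
    groups: dict[str, list[Post]] = defaultdict(list)
    for post in posts:
        groups[normalize(post.text)].append(post)

    clusters = []
    for group in groups.values():
        group.sort(key=lambda p: p.created_at)
        start = 0
        # Slide a time window over the sorted posts that share this text.
        for end in range(len(group)):
            while group[end].created_at - group[start].created_at > window:
                start += 1
            if len({p.account_id for p in group[start:end + 1]}) >= min_accounts:
                clusters.append(group)  # hand the whole group to analysts
                break
    return clusters
```

A flagged cluster is a lead for investigation, not proof of coordination: identical posts can also come from genuine grassroots sharing, which is exactly where human analysts make the call.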

These teams use a blend of digital forensics, cyber intelligence tools, and data analysis techniques to discern the nuances of such campaigns. The challenge lies in distinguishing between legitimate political expression and malicious activities designed to undermine the democratic process. But, again, this is where the combination of human moderators paired with AI tools for scale becomes hugely important.

Once identified, swift action should be taken to mitigate these threats, including removing content, blocking accounts, or flagging information for users. Taking a proactive approach like this not only helps maintain the integrity of the electoral process but also reinforces user trust in the platform as a credible source of information and discourse.